The Missing Half of Spatial Intelligence

In a previous post, I argued that AI needs to wake up to the real world. This week, the industry woke up too.

The news cycle has been dominated by “Spatial Intelligence.” Fei-Fei Li’s startup, World Labs, is reportedly in talks for a $5 billion valuation just months after emerging from stealth. At the same time, the company announced its “World API”, a tool for programmatically generating explorable 3D worlds.

This is a massive validation of the thesis we have held for years: AI’s next great leap is spatial, not just textual.

But for operators of physical spaces (hospitals, venues, campuses), there is a critical distinction between a generated world and the real one.

Simulation vs. Reality: The $5 Billion Gap

The “World API” promises to let AI imagine and reconstruct 3D environments with physics and consistency. This is groundbreaking for training robots or creating simulations. But a perfect 3D model of a hospital corridor is useless to an operational AI if it doesn’t know that a trolley is blocking it right now.

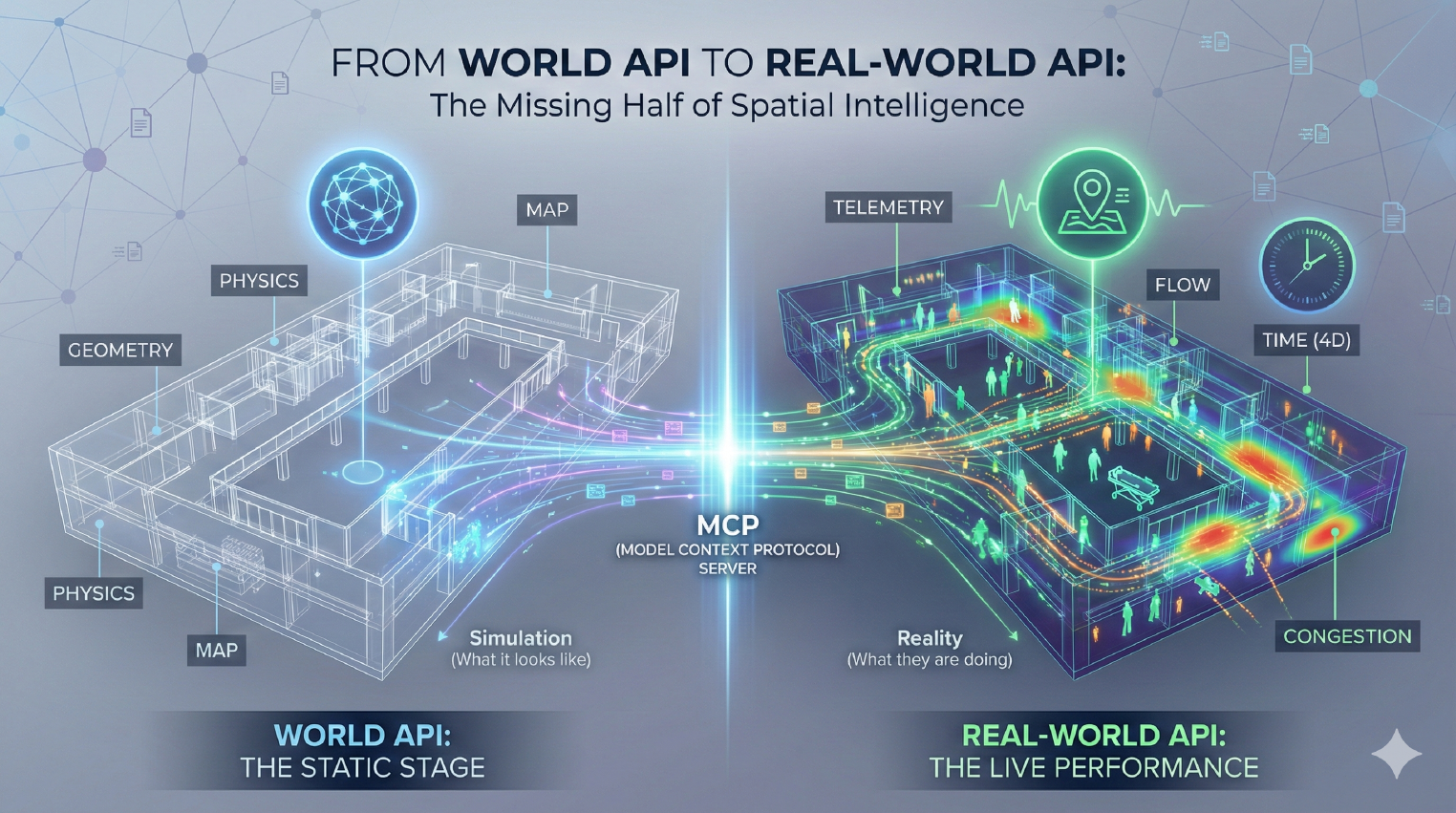

We are seeing “Spatial Intelligence” rapidly split into two necessary layers:

- The Static Stage (World Models): What the environment looks like. This is what World Labs and others are building: the geometry, the physics, the “map.”

- The Live Performance (Telemetry): What the actors are doing. This is where Crowd Connected lives: the positioning, the movement, the flow.

An AI agent responsible for managing a building cannot rely on the stage alone. It needs the performance.

DeepMind Gets It: Time is the Fourth Dimension

It’s not just World Labs. Google DeepMind just released D4RT, a new model explicitly designed for “4D scene reconstruction and tracking.”

Note the emphasis: Tracking.

DeepMind’s research acknowledges that understanding geometry isn’t enough; you must understand motion over time. A map is a snapshot; reality is a stream. As I wrote previously, real-time data is useful, but patterns make it valuable. The ability to track movement through space and time is what turns a static digital twin into a live operational tool.

From “World API” to “Real-World API”

This brings us back to the practical reality for organisations. You don’t need an AI that can hallucinate a new floorplan; you need an AI that knows how your existing floorplan is being used this second.

That is why we are building an MCP (Model Context Protocol) server for spatial data.

If World Labs provides the World API (the container), Crowd Connected provides the Real-World API (the content).

- The World API tells the AI: “This is a corridor, 3 metres wide.”

- The Real-World API tells the AI: “This corridor is currently 90% congested, and the cleaning team hasn’t visited in 4 hours.”
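To make the contrast concrete, here is a minimal Python sketch of how an agent-facing tool might merge the two layers. Everything in it is hypothetical: the `Corridor` and `PositionFix` types, the `corridor_status` function, the five-minute window and the assumed density of one person per square metre are illustrative choices, not Crowd Connected's API and not part of the MCP specification. It shows static geometry (the “stage”) being combined with live position fixes (the “performance”) to produce the kind of answer quoted above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Corridor:
    """Static 'stage' facts, the kind a world model supplies (hypothetical type)."""
    corridor_id: str
    width_m: float
    length_m: float
    # Assumed comfortable density in people per square metre; illustrative only.
    max_people_per_m2: float = 1.0

    @property
    def capacity(self) -> int:
        return max(1, int(self.width_m * self.length_m * self.max_people_per_m2))


@dataclass
class PositionFix:
    """One live 'performance' observation from positioning telemetry (hypothetical)."""
    device_id: str
    corridor_id: str
    role: str  # illustrative labels such as "visitor" or "cleaner"
    timestamp: datetime


def corridor_status(corridor: Corridor, fixes: list[PositionFix], now: datetime,
                    window: timedelta = timedelta(minutes=5)) -> dict:
    """Merge static geometry with recent fixes into an agent-readable answer."""
    here = [f for f in fixes if f.corridor_id == corridor.corridor_id]
    # Count distinct devices seen in this corridor within the recent window.
    recent = {f.device_id for f in here if now - f.timestamp <= window}
    congestion = len(recent) / corridor.capacity
    # Hours since any device tagged as a cleaner was last seen here.
    cleaner_seen = [f.timestamp for f in here if f.role == "cleaner"]
    hours_since_cleaning: Optional[float] = (
        (now - max(cleaner_seen)).total_seconds() / 3600 if cleaner_seen else None
    )
    return {
        "corridor_id": corridor.corridor_id,
        "width_m": corridor.width_m,
        "congestion": round(congestion, 2),
        "hours_since_cleaning": hours_since_cleaning,
    }


# Usage: a 3 m x 10 m corridor, 27 visitors seen in the last minute,
# and a cleaner last seen four hours ago.
now = datetime(2025, 1, 1, 12, 0)
corridor = Corridor("ward-3-east", width_m=3.0, length_m=10.0)
fixes = [PositionFix(f"visitor-{i}", "ward-3-east", "visitor", now - timedelta(minutes=1))
         for i in range(27)]
fixes.append(PositionFix("cleaner-1", "ward-3-east", "cleaner", now - timedelta(hours=4)))
status = corridor_status(corridor, fixes, now)
print(status["congestion"], status["hours_since_cleaning"])  # 0.9 4.0
```

The world model alone could only supply the width; the 90% and the four hours both come from the live stream.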

Why This Matters Now

The capital flooding into World Models confirms that the interface between AI and the physical world is the new frontier. But “Spatial Intelligence” without live data is just a very expensive video game.

For decision-makers in smart buildings and events, the roadmap is becoming clearer:

- Don’t wait for the perfect “World Model” to solve your problems.

- Start capturing the “Real-World” data now. The historical patterns of how people and assets move through your space will be the training data that makes your future AI agents smart.

The “World API” is coming. Make sure your organisation has the “Real-World API” ready to plug into it.

What to Do Now

- Audit your “Live” visibility: Do you have a digital twin that sits dormant? How are you feeding it live position data?

- Review your tracking infrastructure: Are you relying on static counts, or do you have the granular tracking needed to feed an AI agent?

- Watch the “MCP” space: We expect the ability for AI to query your physical space via API to become the standard for operations by 2027.